Pressure Gauge Accuracy Class: 0.1 to 4.0 Selection Guide

When a supplier’s datasheet lists “Cl 1.6” or “Grade A” next to a pressure gauge model, the meaning is not always obvious, and getting it wrong has real cost. In our refinery spec reviews we see this misread weekly. This guide walks the full accuracy-class ladder from 0.1 to 4.0, maps the three standards (ASME B40.100, EN 837-1, GB/T 1226-2017) onto each other, explains the 4:1 calibration rule, and gives a 5-factor decision matrix that engineers actually use on the spec sheet.

What “Accuracy Class” Actually Means on a Pressure Gauge

Accuracy class is the maximum allowed error of a pressure gauge expressed as a percentage of the full-scale span, not as a percentage of the reading. A gauge marked Cl 1.6 with a 0–10 bar range is permitted to deviate up to ±1.6% of 10 bar = ±0.16 bar (±2.3 psi) anywhere on the dial, including a reading of 5 bar where that absolute error is the same.

Most field misreadings of class numbers stem from this single point. “Cl 1.6” does not mean ±1.6% of whatever you happen to be reading; it means ±1.6% of full scale, applied uniformly across the range.
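The arithmetic behind that distinction can be sketched in a few lines of Python. This is a minimal illustration, not part of any standard; the function names `max_abs_error` and `error_pct_of_reading` are hypothetical.

```python
def max_abs_error(class_pct: float, range_min: float, range_max: float) -> float:
    """Permitted absolute error: class (% of full-scale span) times the span."""
    span = range_max - range_min
    if span <= 0:
        raise ValueError("range_max must exceed range_min")
    return class_pct / 100.0 * span


def error_pct_of_reading(class_pct: float, range_min: float,
                         range_max: float, reading: float) -> float:
    """The same absolute error expressed as a percentage of the current reading."""
    if reading == 0:
        raise ValueError("percent of reading is undefined at a zero reading")
    return max_abs_error(class_pct, range_min, range_max) / abs(reading) * 100.0


if __name__ == "__main__":
    # Cl 1.6 on a 0-10 bar gauge, the example used in this section
    print(round(max_abs_error(1.6, 0.0, 10.0), 3))              # 0.16 (bar)
    print(round(error_pct_of_reading(1.6, 0.0, 10.0, 5.0), 2))  # 3.2 (% of reading)
```

The absolute error stays at ±0.16 bar wherever the pointer sits, so the error relative to the reading doubles each time the reading halves.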

The Standard Class Ladder: 0.1 to 4.0

Across all three major standards, accuracy classes form a fixed ladder. Smaller number = higher accuracy = higher price and (usually) larger dial.

| Class | ± error (% FS) | Typical application | Minimum dial size |
|---|---|---|---|
| 0.1 | ±0.10% | Calibration laboratory test gauges | NS 250+ |
| 0.16 | ±0.16% | Lab reference, metrology standards | NS 250+ |
| 0.25 | ±0.25% | Test gauges, secondary standards | NS 160+ |
| 0.4 | ±0.40% | Pharmaceutical, aerospace critical | NS 160+ |
| 0.6 | ±0.60% | Power generation critical loops | NS 100+ |
| 1.0 | ±1.0% | General industrial process | NS 100+ |
| 1.6 | ±1.6% | Standard industrial (most common) | NS 60+ |
| 2.5 | ±2.5% | Utility / less critical industrial | NS 60+ |
| 4.0 | ±4.0% | Compressed-air, very rough indication | NS 40+ |

Precision classes (0.1 / 0.16 / 0.25 / 0.4 / 0.6) are sometimes called “test gauges” or “standards”; industrial classes (1.0 / 1.6 / 2.5 / 4.0) are the everyday plant indicators.

ASME B40.100 vs EN 837-1 vs GB/T 1226-2017 Cross-Walk

Each region maintains its own gauge accuracy standard, and the equivalence is close but not exact. The cross-walk:

| ± error (% FS) | ASME B40.100 grade | EN 837-1 class | GB/T 1226-2017 class |
|---|---|---|---|
| 0.10% | Grade 4A | 0.1 | 0.1 |
| 0.25% | Grade 3A | 0.25 | 0.25 |
| 0.5% | Grade 2A | 0.6 | 0.4 / 0.6 |
| 1.0% | Grade 1A | 1.0 | 1.0 |
| 2-1-2% segmented | Grade A | — | — |
| 3-2-3% segmented | Grade B | — | — |
| 1.6% uniform | — | 1.6 | 1.6 |
| 2.5% uniform | — | 2.5 | 2.5 |

Two practical differences matter for cross-border procurement:

Mandatory vs advisory: EN 837-1 and GB/T 1226-2017 are mandatory in the sense that a gauge claiming compliance must meet every clause, including the same maximum error across the entire span. ASME B40.100 is advisory; manufacturers can claim a B40 grade with segmented tolerances (Grade A allows a tighter ±1% in the middle 50% of the range and a looser ±2% in the bottom and top quarters).

Segmented vs uniform error: ASME Grade A (the most common US industrial spec) is “2-1-2%”, meaning ±2% in the bottom 25%, ±1% in the middle 50%, and ±2% in the top 25% of the dial. An EN 837 Class 1.0 gauge holds ±1.0% across the entire dial. For cross-spec procurement, treat Grade A as roughly equivalent to Class 1.6 if you need a single number for the worst case. GB/T 1226-2017 follows the EN 837 convention.
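To make the segmented-versus-uniform difference concrete, here is a minimal Python sketch of the Grade A band structure as described above. The function names are hypothetical. Treating the exact 25% and 75% points as part of the middle band is an assumption made for this illustration only.

```python
def grade_a_tolerance_pct(fraction_of_span: float) -> float:
    """Grade A '2-1-2' tolerance (% FS) at a dial position given as 0.0-1.0 of span."""
    if not 0.0 <= fraction_of_span <= 1.0:
        raise ValueError("fraction_of_span must lie between 0 and 1")
    # Assumption: the 25% and 75% boundary points count as the middle band.
    return 1.0 if 0.25 <= fraction_of_span <= 0.75 else 2.0


def uniform_class_meets_grade_a(class_pct: float, steps: int = 100) -> bool:
    """True if a uniform class is at least as tight as Grade A at every sampled point."""
    return all(class_pct <= grade_a_tolerance_pct(i / steps)
               for i in range(steps + 1))


if __name__ == "__main__":
    print(grade_a_tolerance_pct(0.10))       # 2.0 (bottom quarter)
    print(grade_a_tolerance_pct(0.50))       # 1.0 (middle half)
    print(uniform_class_meets_grade_a(1.0))  # True
    print(uniform_class_meets_grade_a(1.6))  # False
```

A uniform Class 1.0 gauge is at least as tight as Grade A everywhere. Class 1.6 is tighter than Grade A in the outer quarters but looser in the middle half, which is why the equivalence can only be approximate.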

The 5-Factor Decision Matrix for Choosing a Class

In our refinery instrumentation work, the choice of accuracy class is driven by five factors evaluated in this order:

| # | Factor | What to ask | Typical class |
|---|---|---|---|
| 1 | Process criticality / SIL | Is this a safety-instrumented loop or utility indication? | SIL: 0.5; utility: 1.6 |
| 2 | Regulatory requirement | GMP, API, GB, custody transfer? | GMP / custody: 0.25–0.5 |
| 3 | Operating-pressure span ratio | Is normal reading in the 30–70% FS sweet spot? | If <30%: step up one grade |
| 4 | Calibration source available | Can you verify the gauge with a 4× more accurate reference? | Constrains down-selection |
| 5 | Economics | Each grade tighter ≈ 1.5–2× price | Trim to budget after factors 1–4 |

A working refinery rule of thumb for distillation and FCC service: utility steam and instrument-air loops use Cl 1.6, regular process measurement uses Cl 1.0, critical control points and chemical injection use ±0.5% FS (ASME Grade 2A; the nearest EN 837-1 classes are 0.4 and 0.6), and the lab / calibration bench uses Cl 0.16 or 0.25. Petrochemical critical reactor pressure with a SIL-rated trip uses Cl 0.4 with a 0.1-grade test gauge for periodic verification.

The 4:1 Calibration Rule and Why It Constrains Your Choice

ASME and most national metrology bodies recommend that the calibration reference used to verify a gauge be at least four times more accurate than the gauge under test. Selecting a Cl 1.0 process gauge implies that your calibration bench must reach Cl 0.25, and a Cl 0.4 critical-loop gauge implies a Cl 0.1 reference standard.

China’s JJG 52-2013 Verification Regulation for Spring Pressure Gauges expresses the same constraint slightly differently: the standard gauge must be at least 2–3 levels higher in accuracy class. The two rules converge in practice. If your in-house calibration capability tops out at Cl 0.25, there is no point specifying anything tighter than Cl 1.0, because you cannot prove the better number. An ISO/IEC 17025-accredited calibration lab handles this verification chain.
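The 4:1 constraint can be checked in both directions with a short Python sketch. It assumes the class ladder from the table earlier in this guide; the names `CLASS_LADDER`, `required_reference_class`, and `tightest_verifiable_class` are hypothetical.

```python
# Class ladder taken from the 0.1-4.0 table in this guide.
CLASS_LADDER = [0.1, 0.16, 0.25, 0.4, 0.6, 1.0, 1.6, 2.5, 4.0]
EPS = 1e-12  # guards against floating-point noise in the comparisons


def required_reference_class(gauge_class: float, ratio: float = 4.0):
    """Coarsest ladder class that is still `ratio` times tighter than the gauge."""
    limit = gauge_class / ratio
    candidates = [c for c in CLASS_LADDER if c <= limit + EPS]
    return max(candidates) if candidates else None  # None: no ladder class is tight enough


def tightest_verifiable_class(reference_class: float, ratio: float = 4.0):
    """Tightest ladder class that a given reference can verify at `ratio`:1."""
    limit = reference_class * ratio
    candidates = [c for c in CLASS_LADDER if c >= limit - EPS]
    return min(candidates) if candidates else None


if __name__ == "__main__":
    print(required_reference_class(1.0))    # 0.25
    print(required_reference_class(0.4))    # 0.1
    print(tightest_verifiable_class(0.25))  # 1.0
```

A `None` result from `required_reference_class` means no mechanical class on the ladder is tight enough to serve as the reference, as happens for a Cl 0.25 gauge under a strict 4:1 ratio.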

The same 4:1 rule extends from mechanical-gauge class to transmitter calibration math. See our gauge pressure formula guide for how the calculation flow maps onto HM-series accuracy specs.

Dial Size, Range, and Class: The Coupling Most Spec Sheets Don’t Explain

Mechanical pressure gauges with a dial smaller than 100 mm (4 in) cannot physically achieve Cl 1.0, because gear-train backlash and pointer angular resolution together exceed 1% of full scale. The relationship is roughly:

| Nominal dial size | Highest class achievable | Typical class offered |
|---|---|---|
| NS 40 (1.5 in) | Cl 2.5 | Cl 2.5 / 4.0 |
| NS 60 (2.5 in) | Cl 1.6 | Cl 2.5 |
| NS 100 (4 in) | Cl 1.0 | Cl 1.0 / 1.6 |
| NS 150 (6 in) | Cl 0.4 | Cl 0.6 / 1.0 |
| NS 250 (10 in) | Cl 0.1 | Cl 0.25 / 0.6 |
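As a rough spec-sheet check, the table can be turned into a lookup. This sketch copies the "highest class achievable" column as-is, so it inherits the table's approximations. `HIGHEST_CLASS_BY_DIAL_MM` and `min_dial_for_class` are hypothetical names.

```python
# Highest class achievable per nominal dial size (mm), copied from the table above.
HIGHEST_CLASS_BY_DIAL_MM = {40: 2.5, 60: 1.6, 100: 1.0, 150: 0.4, 250: 0.1}


def min_dial_for_class(target_class: float):
    """Smallest listed dial size whose best achievable class meets the target."""
    for size in sorted(HIGHEST_CLASS_BY_DIAL_MM):
        if HIGHEST_CLASS_BY_DIAL_MM[size] <= target_class:
            return size
    return None  # tighter than any dial size in the table can deliver


if __name__ == "__main__":
    print(min_dial_for_class(1.6))   # 60
    print(min_dial_for_class(1.0))   # 100
    print(min_dial_for_class(0.25))  # 250
```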

Range over-sizing makes the problem worse: a 0–10 bar Cl 1.0 gauge reading 1 bar carries ±0.1 bar absolute error, which is 10% of the actual reading. The standard advice (keep normal operating pressure in the 30–70% FS band) exists precisely because the absolute error set by the accuracy class is fixed, so the error relative to the reading grows as you use less of the range.
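The 30–70% guideline is easy to apply mechanically. The sketch below filters a list of candidate full-scale values for a given operating pressure. The list `CANDIDATE_RANGES_BAR` is an illustrative assumption, so substitute the ranges your supplier actually offers. It also assumes zero-based ranges (0 to full scale).

```python
# Illustrative full-scale values in bar (assumption: zero-based ranges).
CANDIDATE_RANGES_BAR = [1.0, 1.6, 2.5, 4.0, 6.0, 10.0, 16.0, 25.0, 40.0, 60.0]


def suitable_ranges(operating_bar: float, low: float = 0.30, high: float = 0.70):
    """Full-scale values that put the operating pressure inside the low-high band."""
    if operating_bar <= 0:
        raise ValueError("operating pressure must be positive")
    return [fs for fs in CANDIDATE_RANGES_BAR
            if low <= operating_bar / fs <= high]


if __name__ == "__main__":
    print(suitable_ranges(2.0))   # [4.0, 6.0]
    print(suitable_ranges(10.0))  # [16.0, 25.0]
```

For a 2 bar operating point, a 0–10 bar gauge falls outside the band (20% of full scale), while 0–4 bar and 0–6 bar both qualify.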

Common Mis-Selections and How to Avoid Them

Four traps we see repeatedly in spec reviews:

- Reading “Cl 1.6” as 1.6% of reading: it is ±1.6% of full scale, applied uniformly. A 0–10 bar Cl 1.6 gauge reading 2 bar has ±0.16 bar error (8% of reading), not ±0.032 bar.

- Cross-standard substitution without checking segmentation: ASME Grade A (2-1-2% segmented) is not identical to EN 837 Class 1 (1% uniform). Specify the equivalent worst case.

- Legacy “Class 1.5”: GB/T 1226 dropped Class 1.5 in the 2010 revision; new product is Class 1.6. If old drawings call out “1.5”, new suppliers will substitute “1.6” by default. The 0.1% nominal increase rarely matters but is worth documenting on the spec sheet.

- Range-class mismatch on low operating pressure: using a 0–25 bar Cl 1.0 gauge to monitor 2-bar instrument air gives ±0.25 bar absolute, which is 12.5% of operating value. Either down-size the range to 0–4 bar or step the class up to 0.4.
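The two remedies in the last trap can be compared numerically. A minimal sketch, assuming zero-based ranges; `pct_of_reading` is a hypothetical helper name.

```python
def pct_of_reading(class_pct: float, full_scale: float, operating: float) -> float:
    """Class error (% of full scale) re-expressed as % of the operating value."""
    if operating <= 0 or full_scale <= 0:
        raise ValueError("full_scale and operating must be positive")
    return class_pct * full_scale / operating


if __name__ == "__main__":
    operating = 2.0  # bar, instrument air
    options = {
        "0-25 bar, Cl 1.0 (as specified)": (1.0, 25.0),
        "0-4 bar, Cl 1.0 (range down-sized)": (1.0, 4.0),
        "0-25 bar, Cl 0.4 (class stepped up)": (0.4, 25.0),
    }
    for label, (cls, fs) in options.items():
        print(f"{label}: {pct_of_reading(cls, fs, operating):.1f}% of reading")
```

On these figures, down-sizing the range brings the error to about 2% of the operating value, while stepping up the class on the oversized range brings it to about 5%.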

When to Step Up to a Pressure Transmitter Instead

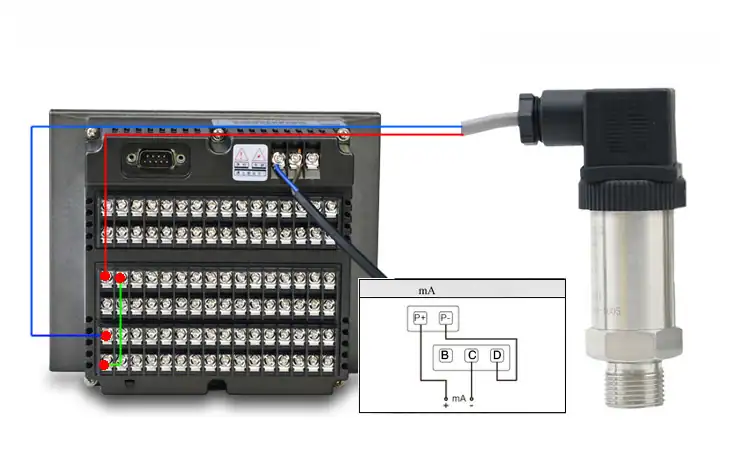

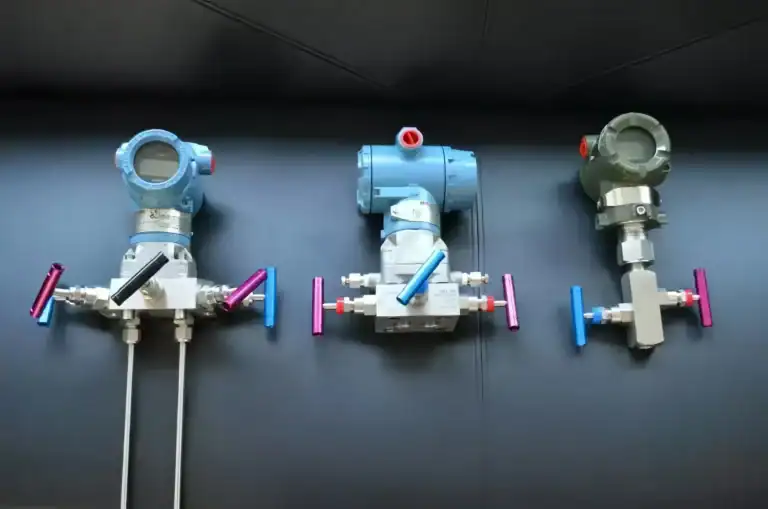

There is a natural ceiling to what a mechanical gauge can deliver, roughly Cl 0.1 on an NS 250 dial. Beyond that, or when the loop needs a 4–20 mA signal into a DCS / SCADA, the right answer is a pressure transmitter, not a finer gauge class. On the P&ID that transmitter shows up as a PT, PIT, or PDT bubble; our transmitter symbol guide decodes which one fits which loop.

Modern industrial transmitters routinely achieve ±0.075% FS to ±0.1% FS, matching or beating a Cl 0.1 mechanical gauge, while feeding the control system directly. For high-accuracy process applications, the HM22 High-Accuracy Pressure Transmitter and HM28 Sapphire Pressure Transmitter cover the precision range where mechanical gauges run out of physical resolution. For the broader product map by sensing technology, accuracy, and output, see our Pressure Transmitter Types pillar.

FAQ

Is Class 1.0 better or worse than Class 1.6?

Class 1.0 is better. The number is the maximum percent error of full scale, so a smaller number means tighter tolerance. Cl 1.0 = ±1.0% FS; Cl 1.6 = ±1.6% FS.

What does Cl 1.6 on a gauge dial mean?

It means the gauge’s maximum error is ±1.6% of the full scale span, anywhere on the dial. On a 0 to 10 bar gauge that is ±0.16 bar (±2.3 psi) absolute.

Is ASME Grade A the same as EN 837 Class 1?

Not exactly. ASME Grade A allows segmented tolerances (±2% / ±1% / ±2% across the dial), while EN 837 Class 1 holds ±1.0% across the entire range. For procurement equivalence in the worst-case region, Grade A is roughly equivalent to Class 1.6.

What is the 4:1 calibration rule?

The calibration reference must be at least four times more accurate than the gauge being verified. Specifying a Cl 1.0 gauge implies a Cl 0.25 (or better) reference standard.

Can a gauge below 100 mm dial achieve Class 1.0?

Generally no. Mechanical gauges below NS 100 are limited by gear-train and pointer resolution to Cl 1.6 or coarser. If you need Cl 1.0 in a small footprint, use a digital pressure transmitter with LCD readout instead. The same accuracy-class language carries over to dial thermometers — our bimetallic, filled, and gas-actuated temperature gauge guide walks the Class 1.0 / 1.5 / 2.5 ladder on the temperature side.
